Ignotum final post

This is our final blog entry here on the Re-FREAM website, but before we dive into it, we would like to thank all of our partners and collaborators:

Fraunhofer IZM: Christian Dils, Max Marwede, Robin Hoske, Sigrid Rotzler, Lars Stagun, Dr. Rafael Jordan, Kamil Garbacz, Sebastian Hohner

Stratasys: Naomi Kaempfer, Yossi Siso

Empa: Agnes Psikuta

Profactor: Pavel Kulha

Wear It Berlin: Manon Montant, Ioana Puscasu, Thomas Gnahm

Other collaborators: Markus Mau, Mira Thul-Thellmann, Tim Schütze

Thank you all for your great help!

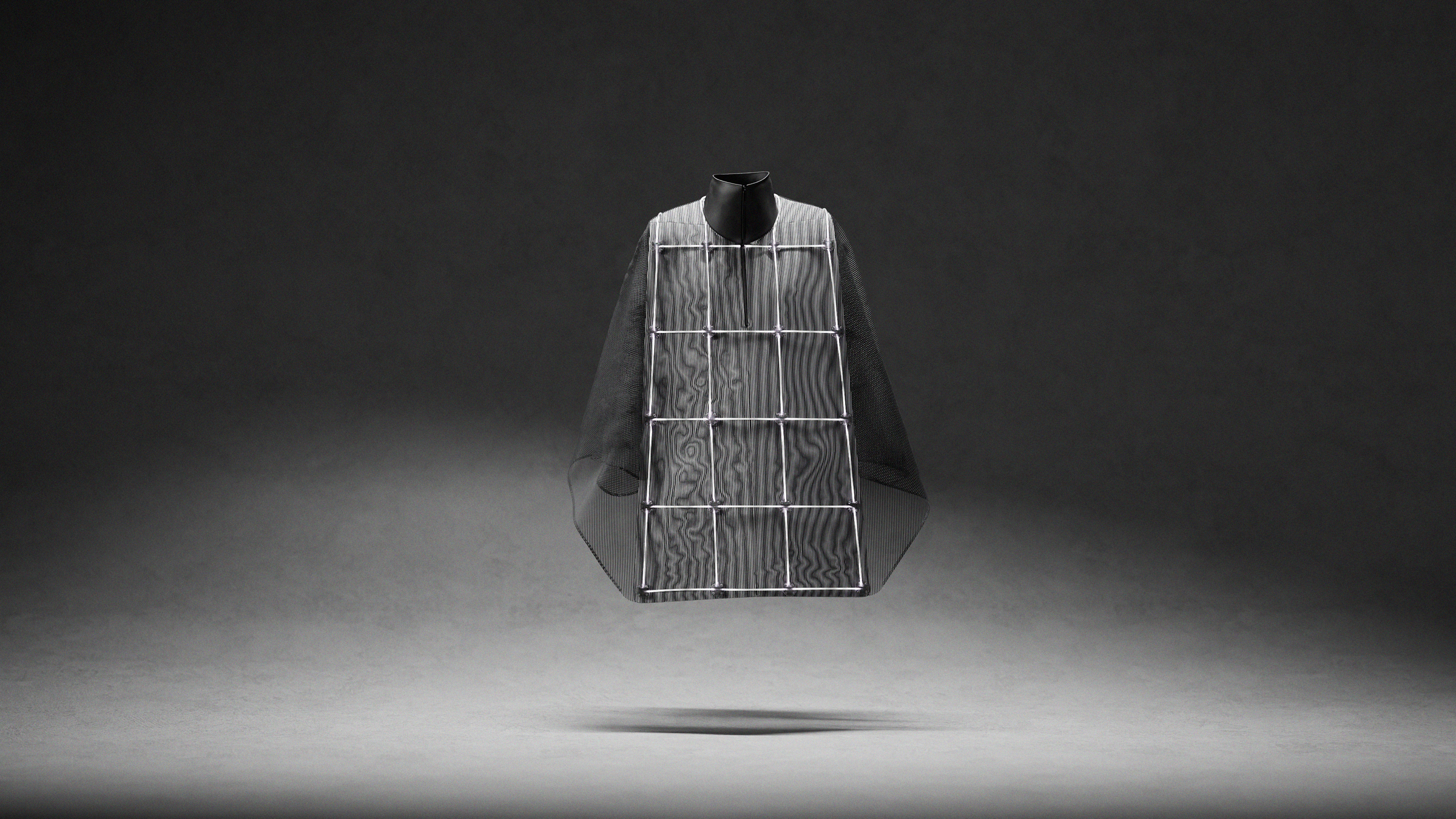

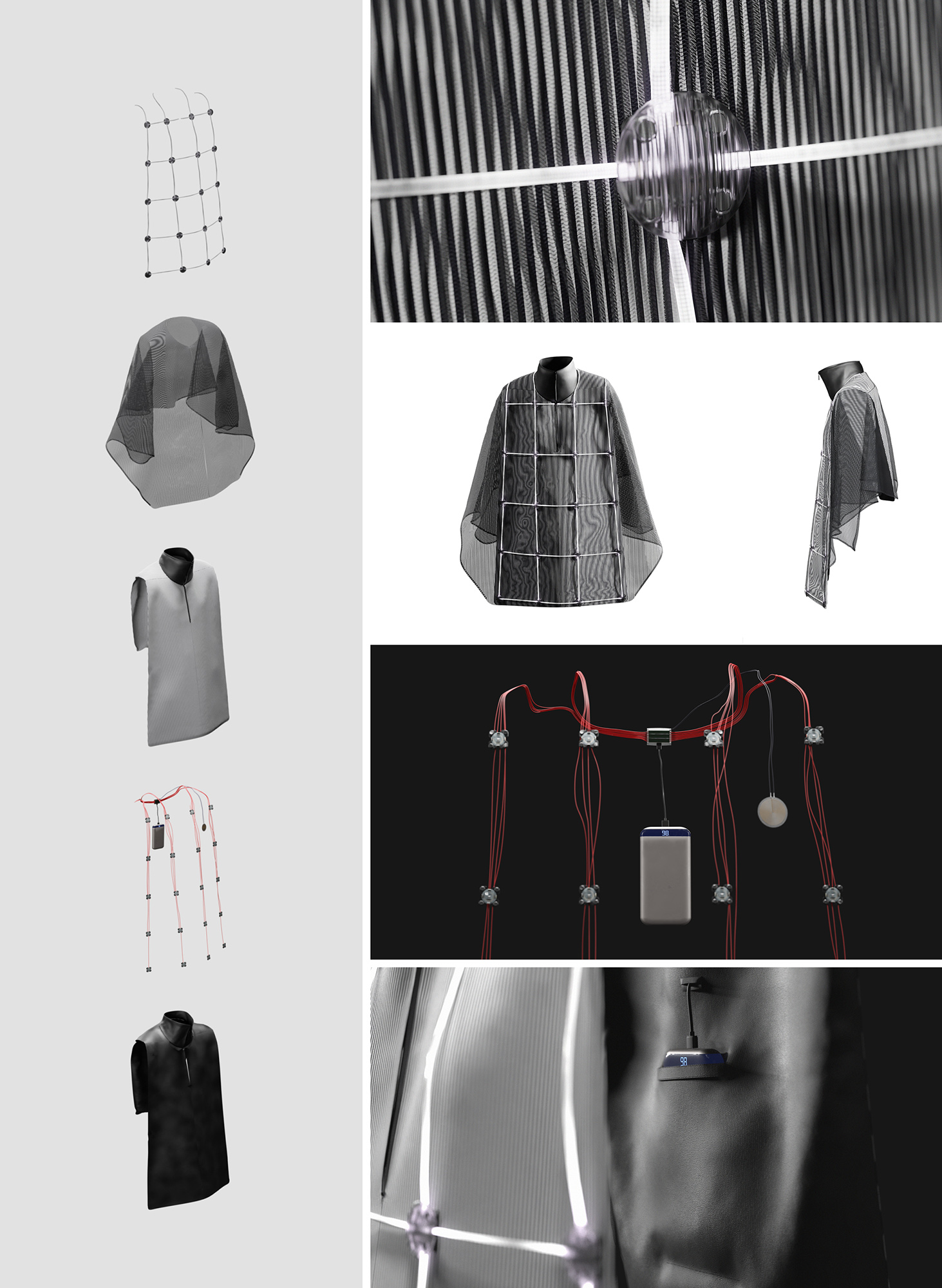

Now, besides the prototype, we also worked on a final design concept that we would like to share with you in the form of computer renders:

Apart from the light guides, it is pretty close to the final prototype. In the design study, we show what 3D-printed light guides could look like in place of the light fibres. It would also have more LEDs, although less powerful ones than those in the prototype. The buttons shown attach to the base layer via magnets.

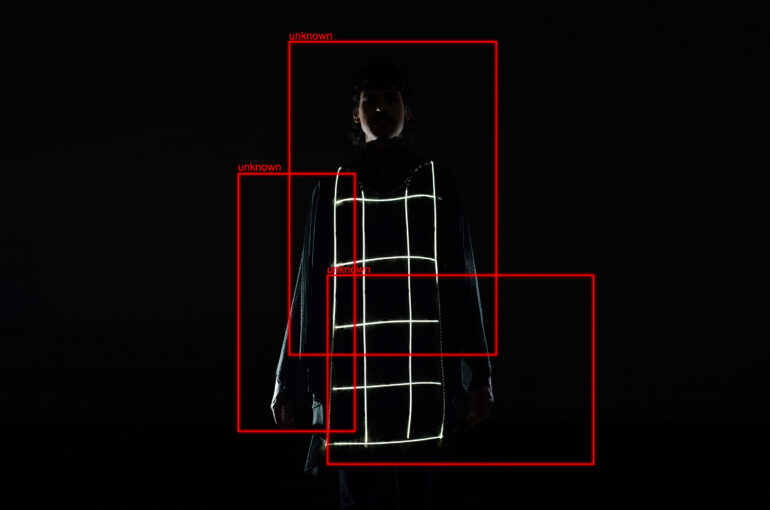

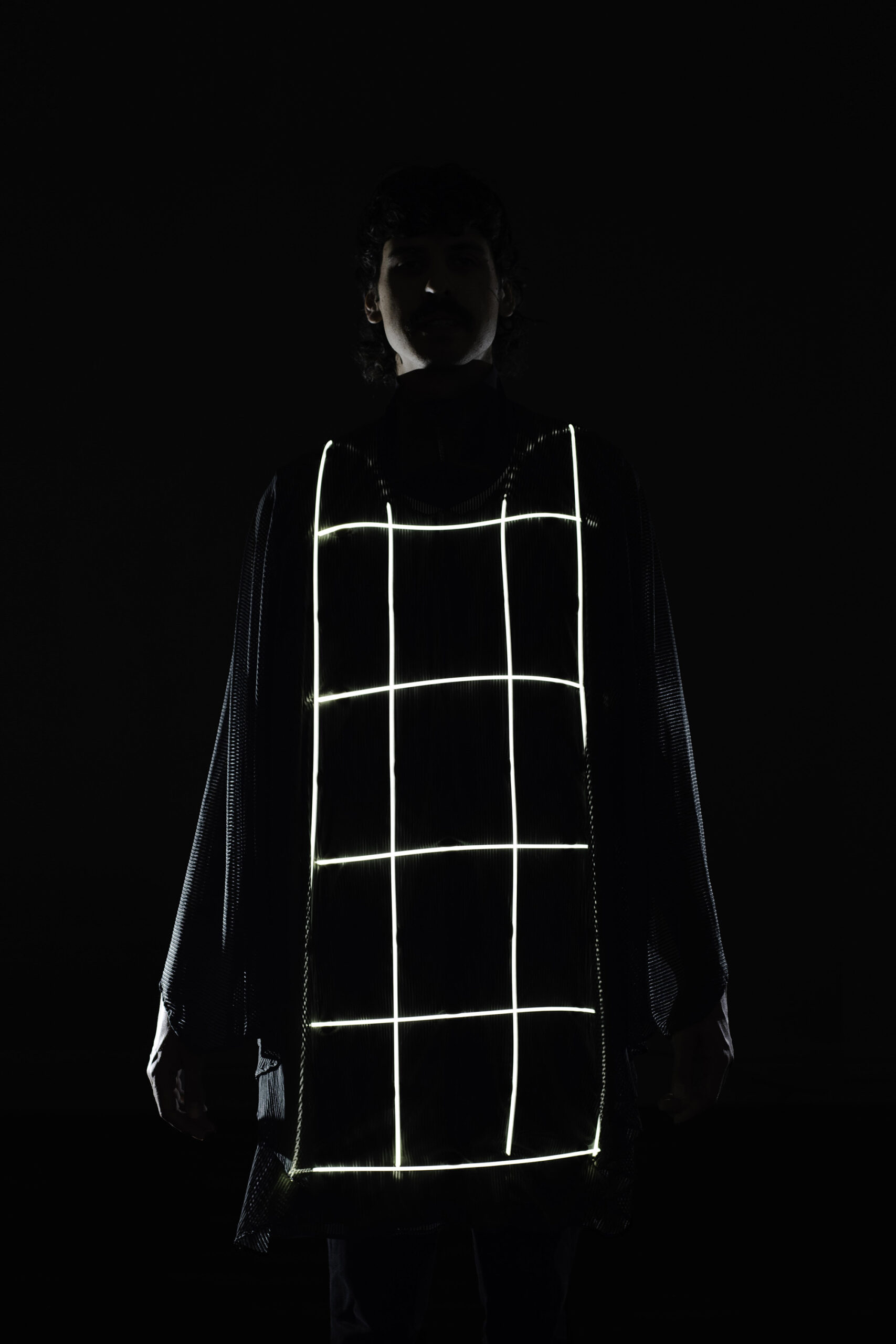

OK, so here are the final images (taken by Tim Schütze) featuring our working Ignotum prototype:

Before we went out to shoot the prototype, we first wanted to check that it still worked. We tested it in different settings and against different backgrounds, and even in quite challenging conditions (meaning strong contrasts, as in the image above) the poncho worked well, bringing the recognition numbers down to a level where they are not strong enough for further processing.
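For readers who want to picture what "not strong enough for further processing" means: many detection pipelines attach a confidence score to each detection and drop everything below a cut-off. The snippet below is only a minimal sketch of that idea. The `Detection` class, the `keep_confident` helper, the 0.5 threshold and the scores are all made up for illustration and are not taken from our test setup.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    confidence: float  # score between 0.0 and 1.0


def keep_confident(detections, threshold=0.5):
    """Return only the detections whose score reaches the threshold."""
    return [d for d in detections if d.confidence >= threshold]


# Hypothetical scores, NOT measurements from our tests.
without_poncho = [Detection("person", 0.92)]
with_poncho = [Detection("person", 0.18)]

print(keep_confident(without_poncho))  # the detection is passed on
print(keep_confident(with_poncho))     # nothing is passed on
```

Anything that falls under the threshold simply never reaches the later steps, such as tracking or identification.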

We went shooting at the central station in Berlin, as well as the Mall of Berlin.

Apart from the odd curious look, people were not too bothered by seeing someone walking around in a glowing poncho.

And here is the final shot, which was taken at night.

All in all, it was a fantastic journey and a great opportunity to work on the project. We learned a lot from our partners and collaborators.

Our next steps in the coming weeks and months are to make the project as public as possible, to create awareness around the topic and maybe also to inspire a DIY project. The prototype as it is works well against “our” A.I. (which is up to current standards), but of course we cannot be sure that it will stay like this forever. A.I. algorithms slice images up into little boxes and analyze each box separately before making an assumption. Our hack attacks this approach on a basic level by overlaying a different box system. So we are fairly confident that our design should work with many A.I.s, but it is impossible to say for sure whether this is really the case.
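To make the "little boxes" idea a bit more tangible, here is a minimal sketch of how an image can be divided into a regular grid of cells, each of which a detector would then look at on its own. The `grid_cells` function is hypothetical, and the 416 x 416 image size and 13 x 13 grid are illustrative values in the style of common grid-based detectors, not the settings of the A.I. we tested against.

```python
def grid_cells(width, height, rows, cols):
    """Return (left, top, right, bottom) pixel bounds for each grid cell."""
    cells = []
    for r in range(rows):
        for c in range(cols):
            left = c * width // cols
            right = (c + 1) * width // cols
            top = r * height // rows
            bottom = (r + 1) * height // rows
            cells.append((left, top, right, bottom))
    return cells


# Illustrative values only (assumed, not from our setup).
cells = grid_cells(width=416, height=416, rows=13, cols=13)

print(len(cells))   # number of boxes the image is sliced into
print(cells[0])     # top-left box
print(cells[-1])    # bottom-right box
```

A pattern on a garment that suggests its own, different set of boxes cuts across these cells, which is the basic level at which our design tries to interfere.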

Please do get in touch if you have any questions or ideas. Many thanks!